Introduction

Everyone is talking about AI agents, but far fewer people are actually using them.

Before building the next generation of AI features into Productive, we wanted to understand where our customers actually stand. How are agency and professional services teams using AI today? What do they think agents can do? What would they hand off, and what would they never let go of?

We surveyed 256 Productive users across individual contributors, managers, and admins in the UK, US, Australia, the Netherlands, and beyond. To complement the numbers, we also ran in-depth interviews with nine people in senior roles across agencies and professional services firms. Here’s what we found.

1. Teams Use AI Every Day — But Not for Everything

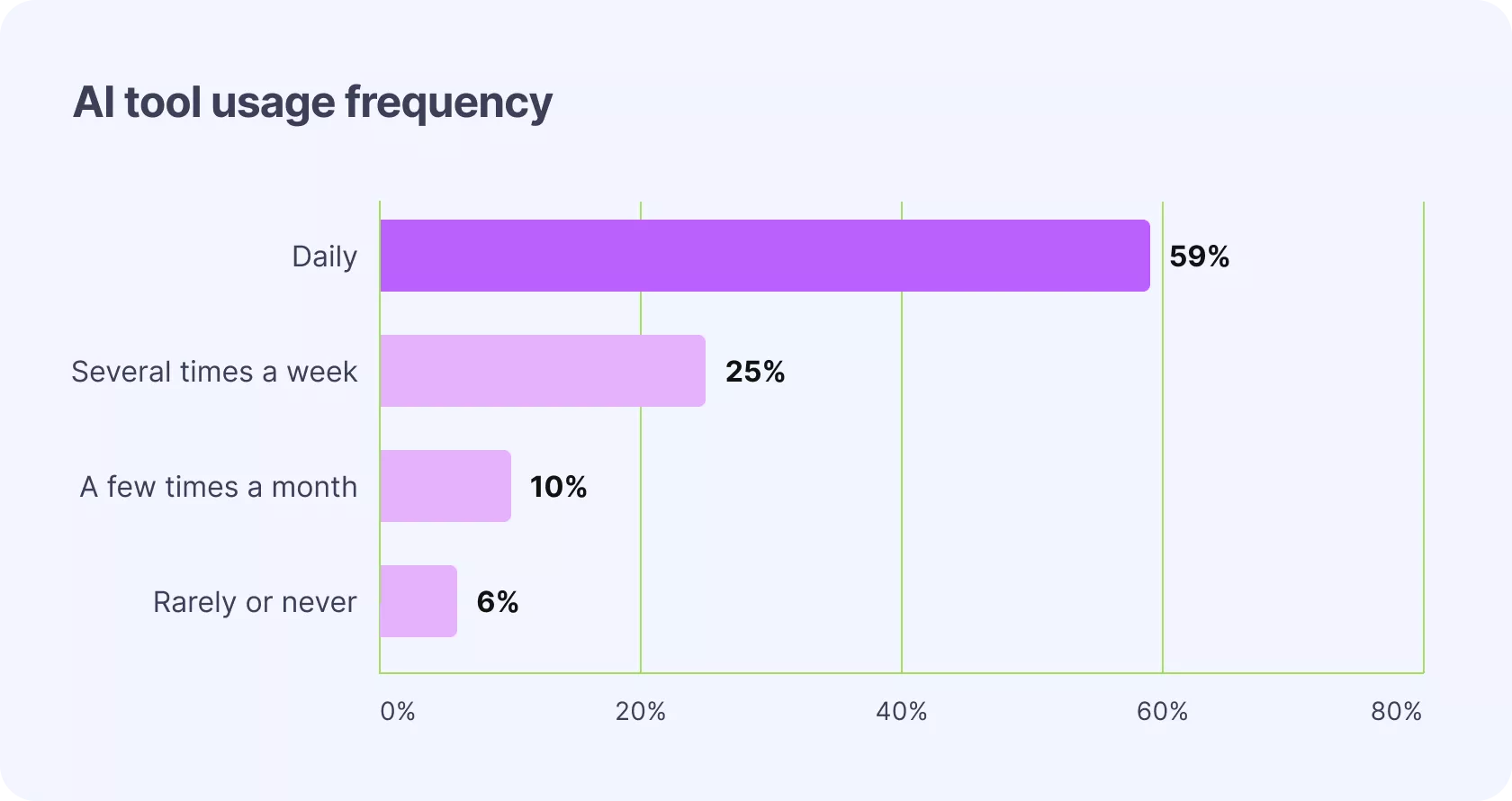

AI tool usage is essentially universal at this point. Across all roles (admins, managers, and individual contributors), the vast majority of respondents use AI tools daily, and this pattern held broadly across job titles, company sizes, and levels of seniority.

What they’re using it for tells a more interesting story.

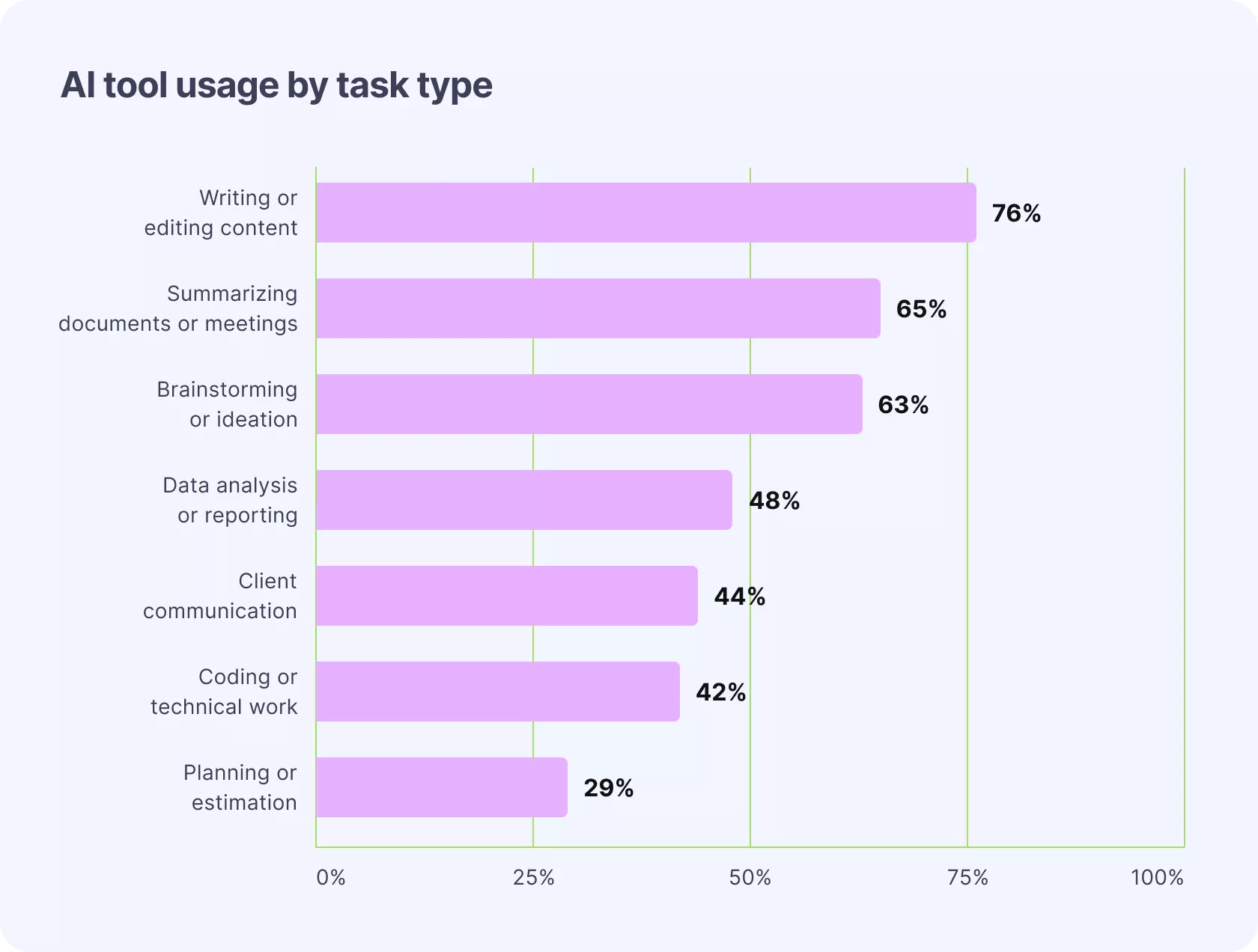

Writing and editing content is the top use case by a significant margin. Summarizing documents or meetings comes second, followed by brainstorming and ideation, data analysis, and client communication.

The task people rely on AI for least? Planning and estimation. This gap — between daily AI use and the specific task of project planning — turns out to be one of the most important findings in the whole study.

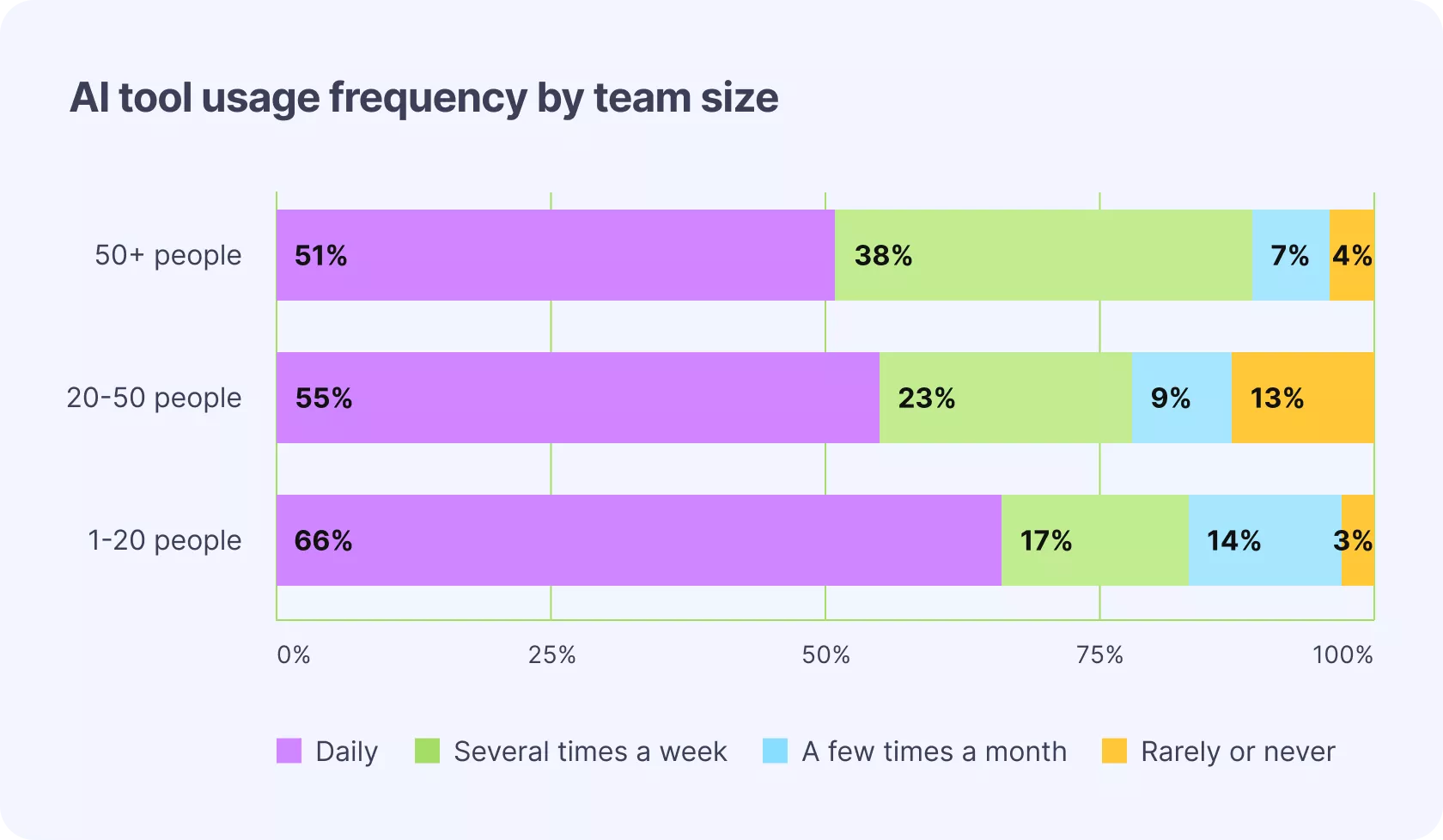

Larger organizations use AI slightly less intensively

Daily AI use is slightly less common in larger organizations (50+ people) than in smaller ones. The difference is modest; more elaborate processes and less time pressure may be contributing factors.

The early builders

A small but notable group (mostly admins at larger or more technically sophisticated firms) has started experimenting with custom AI integrations: pulling project data into AI tools, building lightweight resource request workflows, and using AI to interpret reports.

These are early-stage experiments, and the teams running them are clearly ahead of the curve. Most respondents said they have ideas for agents but lack the time and technical expertise to build them.

2. Most People Think AI Agents Are Chatbots

We asked respondents directly: how do you define an AI agent? The results reveal a genuine gap in how people understand the technology.

In interviews, experienced users drew a clean distinction: a chatbot responds when prompted; an agent acts on your behalf. The survey tells a more mixed story. “A system that can act on my behalf” was the more common description, but the effect is small: nearly 1 in 3 respondents described AI agents in terms that are essentially equivalent to how they’d describe a chatbot.

Proactive capability — the idea that an agent might act without being explicitly asked — is the least common association. Most users expect to still “call” the agent themselves.

Role shapes how people describe agents, but not how well they understand them

Admins were slightly more likely to emphasize agent autonomy. Managers tended to think in terms of what agents could do for them. Individual contributors were more likely to describe generic AI tools and express skepticism. But no group had a clearly accurate mental model across the board.

Interestingly, heavy AI users are no better at defining agents than occasional users. Frequency of use doesn’t build conceptual clarity about what agents are.

Here’s a sample of how users described AI agents in their own words:

- “An autonomous piece of software that handles a task, taking its own decisions given a specific context.”

- “Like Clippy, but more helpful and accustomed to your usual behaviours, tone, and type of work.”

- “A robot butler that can be tasked semi-autonomously to complete tasks or coordinate with other systems.”

- “A nondeterministic piece of garbage that will randomly blow away your entire hard drive.” (The skepticism is real. —ed.)

3. AI Agents Are Seen as Capable, Just Not Human

We asked respondents to rate AI agents on a series of paired adjectives: friendly vs. unfriendly, intelligent vs. unintelligent, ethical vs. unethical, human-like vs. mechanical, and so on.

Three clusters emerged:

- High-rated: Friendly, Active, Kind, Positive, Intelligent. People see AI agents as capable, cooperative, and approachable.

- Mid-rated: Ethical, Responsible, Simple, Warm. Respondents aren’t convinced agents are particularly ethical or warm, and they don’t find them simple to use.

- Lowest-rated: Human-like. This sat clearly apart from everything else.

People are not anthropomorphizing AI. They don’t want or expect agents to feel human. They want tools that are capable and helpful, and they don’t need those tools to act like colleagues.

4. The Tasks People Are Ready to Delegate — And the One Hard Line

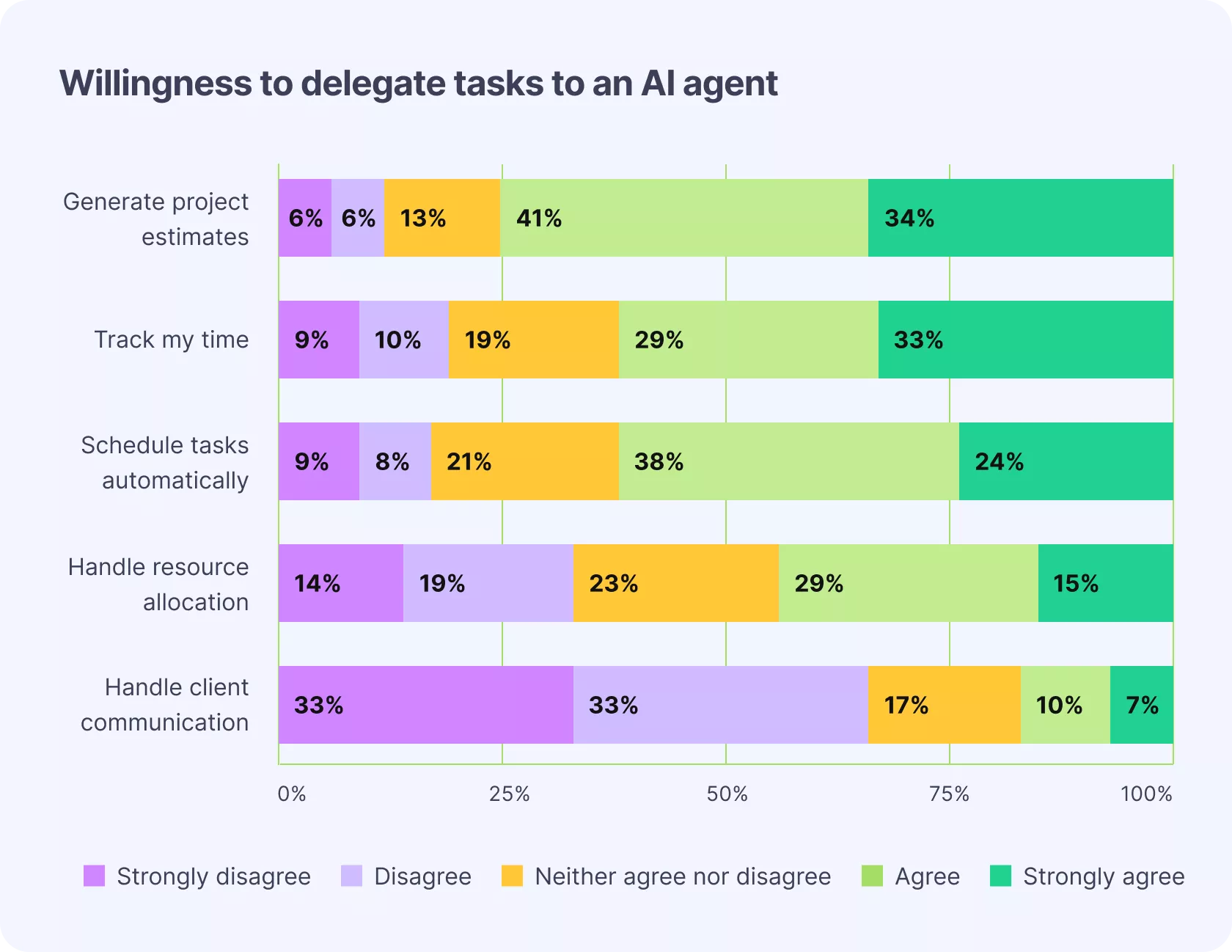

We asked respondents: “I would trust an AI agent to…” and presented five task types. The results are striking in their consistency.

Project estimation came out on top — and it’s the task users currently use AI for least. There’s a clear unmet need here. People recognize that estimation is data-driven, reversible, and time-consuming. They want help with it, but current tools haven’t addressed it.

Time tracking and task scheduling also scored high. Both are operational, internal, and easy to check before anything goes out the door.

Resource allocation sits in the middle. People are open to it, but it involves judgment calls and affects colleagues’ workloads directly. Larger organizations are particularly cautious here.

Client communication is the clear exception. Only about 1 in 4 respondents would trust an agent with client-facing messages — and in interviews, almost no one expressed willingness to hand this off entirely. This boundary held firmly across roles and company sizes.

Why people won’t hand client communication to AI

Three concerns came up consistently in interviews:

Emotional disconnect and relationship damage. Client relationships are built through genuine personal interaction. Using AI to handle that — even if the output is technically correct — risks eroding the relationship itself. Several people described wanting to “gatekeep” AI to operational work and keep humans in the loop for anything relationship-bearing.

Brand and voice. Multiple people mentioned experiences where clients recognized AI-assisted communication for what it was. One described a client flagging a pattern of long dashes and bold text as “very GPT.” Sending something that doesn’t sound like you, and getting a poor response as a result, is a real risk that some have already experienced.

Perceived value. If clients notice AI is handling their communication, they start wondering what they’re actually paying for. Several people raised this directly: using agents for client-facing work risks undermining the premium that comes with expert human service.

None of this means people want zero AI involvement in communication. Several said they’d welcome AI-assisted drafting, with their own tone of voice applied and a human reviewing everything before it is sent.

5. Trust in AI Agents Depends Almost Entirely on Control

Across interviews and survey responses, this is the clearest pattern in AI agent trust: the more control people have over what the agent does, the more willing they are to use it.

“Human in the loop” wasn’t just a preference. It was described as an expected standard. People want to:

- See what the agent is doing and why

- Review outputs before they take effect

- Be able to pause, adjust, or undo actions

- Know which data the agent has access to

One respondent described the trust-building process this way: starting with full review of every action, and gradually expanding autonomy as confidence builds. Another framed it as the difference between a junior employee who needs supervision and a senior one who can act independently — and noted that AI hasn’t yet earned senior status.

6. What People Worry About When They Worry About AI

Only about 1 in 10 respondents reported having no concerns about AI agents. The remaining 90% or so were cautiously optimistic, but with specific, articulable worries.

Accuracy and reliability was the most commonly cited concern. Respondents mentioned hallucinations, failure to account for full context, and the need to check everything.

Other concerns, roughly in order of frequency:

- Data privacy and security: Which model is being used? Where is data stored? Is it GDPR-compliant? What happens if an agent is “over-permissioned” and surfaces data it shouldn’t?

- Unintended or irreversible actions: Particularly among contributor-level respondents, who felt most exposed to AI acting on their behalf without explicit approval.

- Erosion of human skills: Several people raised the concern that offloading cognitive work to AI might make them worse at their jobs over time.

- Homogenization of output: Particularly among admins, who noted that AI-generated content increasingly sounds the same, reducing individual voice and style across the industry.

- Job security: Present, though less dominant than might be expected.

- Ethical and environmental impact: More prominent among managers, who raised questions about the carbon cost of AI and whether automating “pointless” work actually serves anyone.

One user put the accuracy concern bluntly: “It’s like an intern that acts like they are a senior employee.”

Another framed the data risk well: “If an agent is over-permissioned, it might have the power to do things it shouldn’t, like accidentally sharing a confidential salary spreadsheet because you asked it to ‘summarize recent attachments.’”

7. The Tasks People Actually Want Agents to Handle

When we asked respondents what they’d want AI agents to handle, the responses were specific and consistent. The tasks that came up most often share a common profile: they’re repetitive, data-driven, and time-consuming — the kind of work that has to get done but rarely requires the judgment or relationship skills that define good professional services work.

Across project management, finance, resourcing, and beyond, the ask is essentially the same: handle the overhead so we can focus on the work that actually matters.

Project management

Updating task statuses based on comments and activity, surfacing action points, sending reminders for time logging and task completion, summarizing project health, generating project or budget proposals from documents or rules.

Reporting and intelligence

Interpreting reports on demand, generating time or deal reports by person or project, flagging projects that might be at financial risk.

Resourcing

Checking whether a person (or someone with specific skills) has capacity in a given timeframe, generating resourcing summaries, modeling potential resourcing scenarios.

Finance

Drafting invoice templates, flagging invoices for review, sending payment reminders according to predefined rules.

Time tracking

Drafting time entries from calendar activity, meeting transcripts, or task interactions — without manual logging.

Meeting capture

Recording and transcribing calls, converting discussions into tasks, maintaining a project knowledge base that a colleague can query if the original owner is unavailable.

Methodology

This research was conducted by Productive’s UX Research team in Q1 2026.

Survey: 256 Productive users across agency and professional services roles (86 individual contributors, 7 coordinators, 61 managers, 102 admins). Average organization size in the sample: 129 seats. Countries represented include the UK, US, Australia, the Netherlands, Germany, Belgium, Canada, Croatia, and others.

Interviews: 9 in-depth, 30-minute interviews with users in senior or leadership roles (6 admins, 2 managers, 1 senior profitability manager). Organizations ranged from under 20 seats to over 50.

Analysis: Quantitative data analyzed using descriptive statistics, ANOVA, Friedman test, chi-square, exploratory and confirmatory factor analysis, and multiple regression. Qualitative data analyzed via thematic and content analysis.
